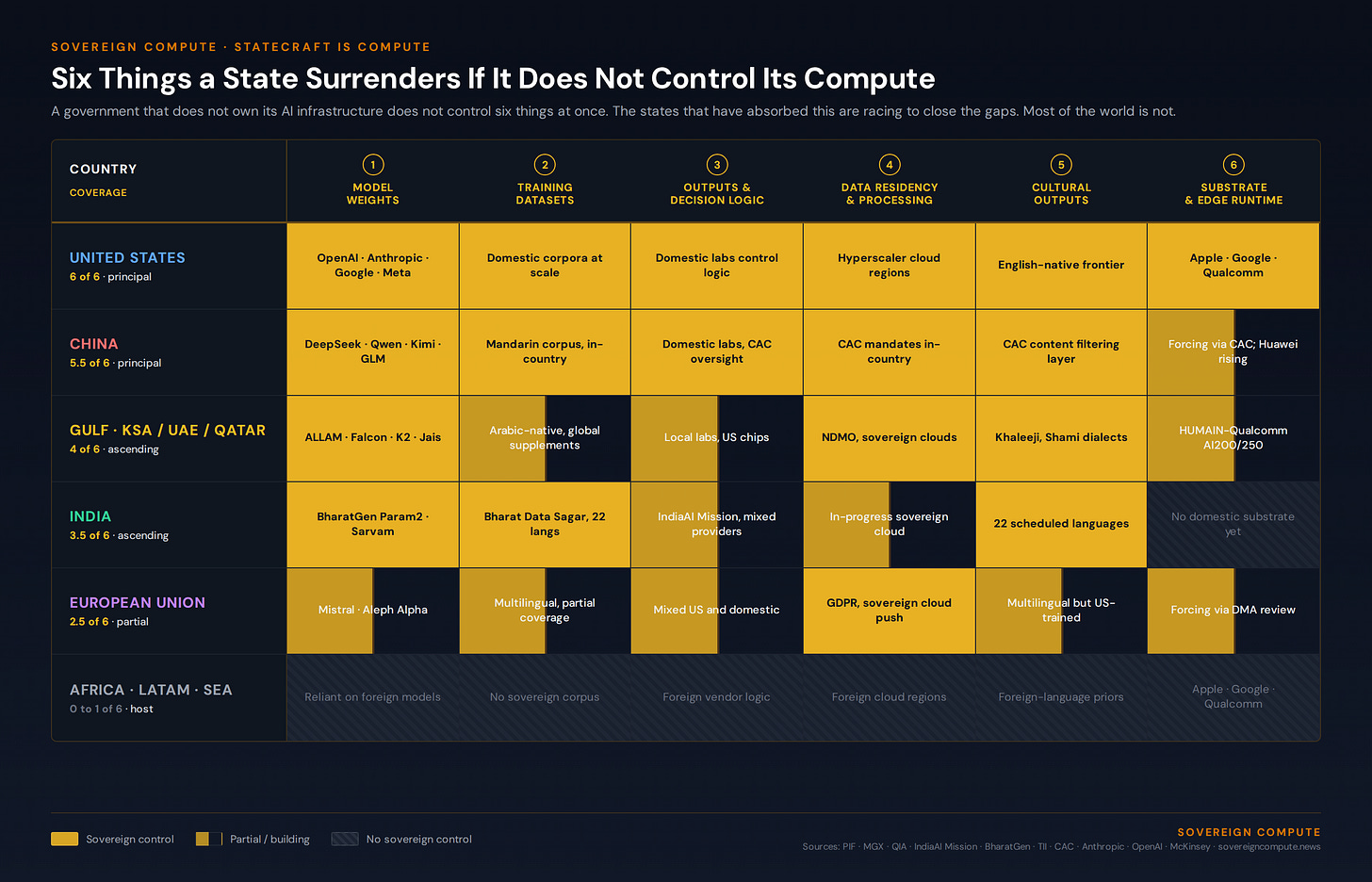

Statecraft Is Compute. Six things a state surrenders if it does not control its compute, scored across the major players.

The United States, China, Singapore, Saudi Arabia, the UAE, and Qatar have moved from regulating AI to building it. The chair of the AI vehicle is increasingly the head of state.

Sovereignty has always required an instrument the state could capitalise at scale. Land, then navies, then reserves, then deterrents. Compute is the next one.

A state that does not control the AI infrastructure its citizens use does not control six things at once.

It does not control the model weights, which means it cannot guarantee the system will behave the same way next month as it does today. It does not control the training datasets, which means the priors baked into the model on history, religion, geography, and law were chosen elsewhere. It does not control the outputs, which means decisions affecting taxation, healthcare, border control, and education are made through a black box owned by a foreign vendor. It does not control where the data is processed or where the analytics are run, which means citizen records, financial flows, and intelligence inputs cross jurisdictions every time a query fires. It does not control the cultural outputs, which means what a child reads, what a journalist drafts, and what a civil servant summarises is shaped by a model trained to a different national context. And it does not control the substrate, which means that in a disruption it cannot fall back on the distributed compute already in citizens’ pockets (their phones), because the orchestration layer sits on someone else’s cloud.

That is the inventory of what is at stake. The Anthropic-Pentagon dispute makes it concrete.

In late 2025, the United States Department of Defense pushed Anthropic to drop two carve-outs in its usage policy: a prohibition on mass domestic surveillance, and a prohibition on fully autonomous weapons. Anthropic refused. In February 2026, the Secretary of War designated Anthropic a “supply chain risk,” a label previously reserved for foreign adversaries. The Court of Appeals for the DC Circuit, in April 2026, declined to lift the designation while noting that doing so “would force the United States military to prolong its dealings with an unwanted vendor of critical AI services in the middle of a significant ongoing military conflict.” OpenAI moved within hours of the conflict’s start to secure a Pentagon agreement without Anthropic’s carve-outs. Treasury, State, and Health and Human Services were directed to move off Claude.

The Pentagon is the sole hyperpower’s defence ministry. Anthropic is one of three frontier US AI labs. They sit inside the same jurisdiction and the same political alliance. They still hit an impasse over use cases the state considered essential and the lab considered unacceptable, in the middle of an active war. The state had to find another vendor at speed.

Now translate that to any other state. A foreign government using Claude, ChatGPT, Gemini, or Grok for citizen-facing services has no equivalent recourse. It has a terms-of-service agreement. In October 2025, OpenAI updated its usage policy to ban “tailored advice that requires a license,” covering legal, medical, and financial guidance. Any government that had built citizen-facing public service applications on top of that API woke up to find that entire categories of use might now be prohibited. APIs can be revoked. Cloud regions can be quarantined under extraterritorial law. Model versions can be retired without notice. Usage policies can change unilaterally.

This is what a state actually loses when its AI runs on someone else’s stack: operational continuity, jurisdictional control, cultural authorship, and the ability to shape its own citizens’ interactions with the technology that increasingly mediates public life.

The states that have internalised this have moved from regulating AI to building it.

When the Crown Prince of Saudi Arabia chairs the board of an AI company, the company is not a portfolio holding. HUMAIN was launched in May 2025 as a wholly owned subsidiary of the Public Investment Fund, with Mohammed bin Salman as chair and Saudi Aramco as a minority shareholder. The mandate runs across the entire AI stack: data centres, cloud, the ALLaM Arabic-language model, and consumer applications. Disclosed commitments include a 600,000-GPU agreement with Nvidia, a $5 billion AI zone with AWS, a $3 billion stake in xAI, and a target of three to six gigawatts of compute capacity that maps to between $90 billion and $300 billion of total infrastructure spend at full build-out.

The same architecture is showing up across the Gulf and Asia. Qatar’s QIA created Qai in December 2025 as a wholly owned AI company, then formed a $20 billion joint venture with Brookfield to develop integrated compute facilities, with explicit support from the Government of Qatar. Abu Dhabi’s MGX, with a $100 billion AUM target, is chaired by Sheikh Tahnoun bin Zayed, the UAE’s National Security Adviser. Singapore’s Temasek joined the AI Infrastructure Partnership in December 2025. Kuwait’s KIA joined six months earlier. Canada launched a $2 billion AI Sovereign Compute Infrastructure Program. The chair of the AI vehicle is, increasingly, a head of state or a national security official. The capital is sovereign capital. The mandate is written by the state.

Six things a state surrenders if it does not control its compute, scored across the major players.

Three players have absorbed this and are paying for it at scale. Each pays in a different way.

The American model runs on hyperscaler balance sheets. Microsoft, Alphabet, Meta, and Amazon are tracking roughly $700 to $725 billion in combined 2026 capex, almost double 2025. Stargate, the announced $500 billion vehicle led by SoftBank and OpenAI with Oracle and MGX, sits on top, financed through SoftBank borrowing, JPMorgan project debt, and Gulf sovereign equity. The state directs through procurement, export controls, and tax policy. The Anthropic case shows the limit: when state and lab disagree on use cases, the state must find a different lab.

The Chinese model runs on a different instrument set. Beijing’s state venture capital guidance fund, announced in March 2025, targets nearly ¥1 trillion over twenty years. Government-directed AI investment hit roughly ¥345 billion in 2026, with another ¥258 billion in corporate R&D at Alibaba, Tencent, and Baidu. Top Chinese internet firms are projected to invest more than $70 billion in AI infrastructure in 2026. The instruments are guidance funds, state-owned banks, and direct mandates to listed national champions.

The Gulf model is the third and most explicit. There is no equivalent of Microsoft or Tencent on the cap table. There is no $50 trillion debt market to draw from. What the Gulf has is sovereign capital with patient duration, political alignment with the state’s mandate, and energy reserves that translate directly into compute capacity. The wealth fund becomes the principal vehicle because nothing else fits the shape of the problem.

What the three share matters more than what divides them. Each is a structure that lets the state set the terms of the stack. The US sets terms through export controls and procurement. China sets terms through ownership and guidance. The Gulf sets terms through wealth fund mandates and chairmanships. The form is different. The function is the same.

One contrast sharpens the picture. In May 2026, after visiting most of the leading Chinese labs, Nathan Lambert observed that Meituan, Xiaomi, and Ant Group, none of them traditionally AI companies, all build their own general-purpose LLMs. Their US equivalents buy AI as a service. Ownership mentality has saturated the Chinese corporate layer in a way it has not in the American one.

Now the rest of the world. The EU’s InvestAI initiative targets €200 billion through 2030, with €50 billion public and €150 billion pledged by Blackstone, KKR, EQT, and other private investors who can walk away if returns disappoint. The UK’s Sovereign AI sits inside a private vehicle backed by Accenture, Palantir, Dell, and Nvidia. India’s IndiaAI Mission has subsidised access to over 38,000 GPUs and targets $200 billion under Mission 2.0. France has €109 billion announced, much of it private. Most of Africa, Latin America, and Southeast Asia have none of these instruments at scale. They will host other people’s stacks.

There is one architectural development that changes this map.

Smartphone penetration in Africa is projected to reach 81 percent of mobile connections by 2030, up from 51 percent in 2023. Asia-Pacific and Latin America already exceed 80 percent. Modern flagship phones can run small but capable language models on-device. A country that orchestrates inference across its citizens’ phones has a distributed compute layer that does not depend on any single foreign cloud region staying online. The catch is the substrate underneath: iOS, Android, and the on-device AI runtimes are owned by US firms. A few significant states are already addressing it. China requires models running on foreign devices sold in the country to be approved by the Cyberspace Administration of China, which is why Apple Intelligence ships in China only with Alibaba’s Qwen and a state-mandated filtering layer. Saudi Arabia’s HUMAIN signed with Qualcomm to deploy ALLaM on Qualcomm’s AI200 and AI250 rack-scale inference systems, building the inference layer at the chip level. The UAE’s Technology Innovation Institute released Falcon-Edge, a series of 1.58-bit models designed to run on edge devices outside the major OS runtimes. The EU is using the Digital Markets Act review to bring AI assistants under interoperability obligations.

In other words, the substrate is contestable. The states with the means are contesting it. Countries that build this layer over the next five years will have an answer to disruption that does not necessarily require a hundred-billion-dollar wealth fund.

Governments are becoming infrastructure companies because infrastructure has become a precondition for governing at scale. The chair of the AI vehicle can now be the head of state because the AI vehicle is where part of the state's work now gets done. Sovereignty has always required an instrument the state could capitalise at scale. Land, then navies, then reserves, then deterrents. Compute is the instrument this generation of statecraft is built on.

SOURCES

PIF (HUMAIN structure, Aramco minority stake) · Arab News (NIF and HUMAIN $1.2B framework) · House of Saud (PIF 2026 to 2030 strategy, FII Miami) · QIA / Brookfield press releases (Qai $20B JV, December 2025) · Mubadala / AGBI / Bloomberg (MGX structure, $100B AUM target) · Reuters / Salem Radio Network (Temasek and KIA into AI Infrastructure Partnership) · OpenAI press releases (Stargate sites, financing structure) · The Information / WSJ (Stargate equity contributions) · CNBC and Wikipedia (Anthropic-DOD dispute) · Anthropic public statements (February 2026) · OpenAI usage policy update (29 October 2025) · CNN / NDRC (China state venture capital guidance fund, March 2025) · Second Talent (China 2026 AI investment statistics) · Futurum / CreditSights (hyperscaler 2026 capex aggregates) · TechPolicy.Press / European Commission (InvestAI €200B structure) · GSMA Mobile for Development (smartphone penetration projections) · McKinsey (Sovereign AI agenda, December 2025) · Acadlore JORIT (peer-reviewed sovereign LLM bias study, 2025) · Nathan Lambert, Notes from inside China’s AI labs, Interconnects, 7 May 2026 (https://www.interconnects.ai/p/notes-from-inside-chinas-ai-labs) · TechCrunch and SCMP (Apple-Alibaba Qwen partnership, China CAC requirements) · Middle East AI News (HUMAIN-Qualcomm AI200 / AI250 deployment, October 2025) · TII press releases (Falcon-Edge BitNet 1.58-bit model) · ComplianceHub.Wiki and European Commission (DMA two-year review, AI services and cloud, April 2026).

A NOTE ON INDEPENDENCE

All opinions shared in this newsletter are my own and do not reflect the views of dmg events, ADIPEC, or any affiliated organizations. This is personal analysis, not institutional positioning.