Your AI Infrastructure Map Is Already Obsolete

The biggest capital deployment since the post-war era just shifted geography. Here's where the money is actually going.

Global data center capex is on track to approach $1 trillion in 2026.

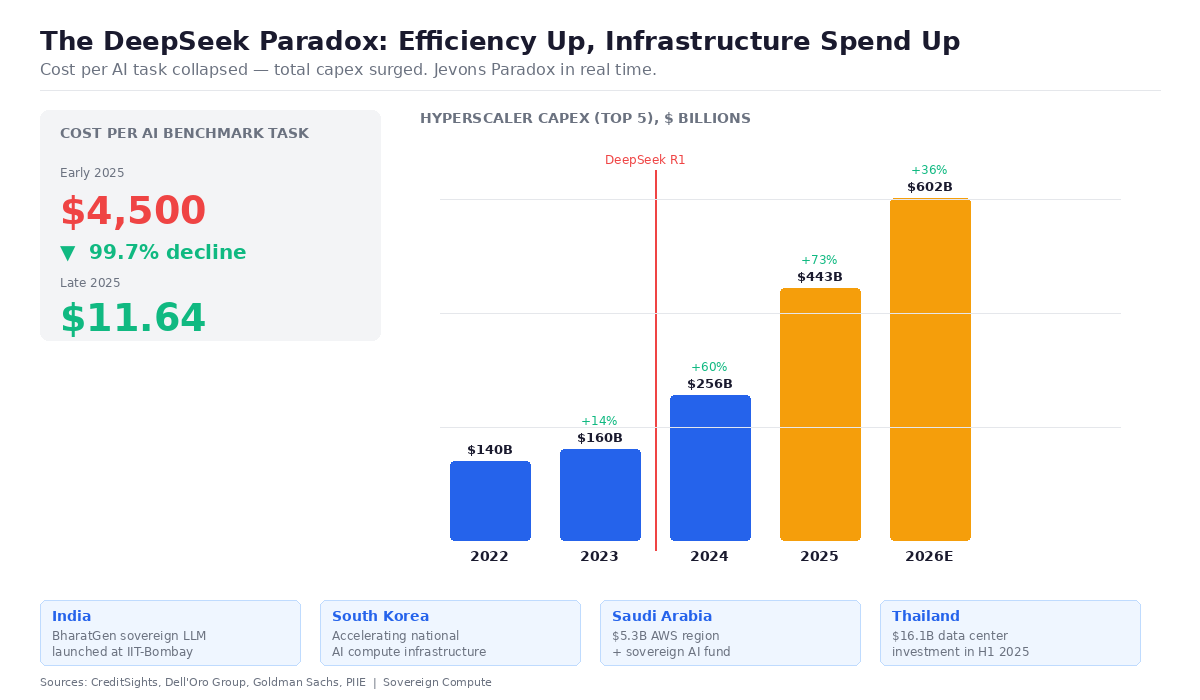

Dell’Oro Group’s latest forecast, published last week, projects the top four US hyperscalers — Amazon, Microsoft, Google, and Meta — will spend close to $600 billion combined this year, up sharply from prior years. Capital intensity across these companies has reached 45–57% of revenue, levels historically associated with utilities, not tech firms. GPUs and custom AI accelerators now account for roughly a third of total data center capex. By any measure, this is the largest infrastructure buildout since the post-war industrial era. But the geography of that buildout has fundamentally shifted over the past twelve months, and most infrastructure strategies haven’t caught up.

The shift traces back to an economics concept from 1865. In late 2024 and early 2025, DeepSeek released its V3 and R1 models, demonstrating that frontier-capable models could be built for a reported $5.6 million in GPU compute costs. Even after accounting for excluded R&D and infrastructure overhead, that was a fraction of what Western labs assumed necessary. The immediate market reaction was panic: if AI is getting cheaper, who needs all this infrastructure?

The answer turned out to be everyone.

The Peterson Institute confirmed it this month: the cost to achieve a comparable score on a challenging AI benchmark plunged from $4,500 per task to $11.64 over the course of 2025. Yet growth in AI usage outpaced those efficiency gains. Anthropic’s annualized revenue run rate climbed from roughly $1 billion to over $9 billion in twelve months. OpenAI projected $20 billion in 2025 revenue, up from around $3.7 billion in 2024. Google Cloud revenue grew 48% year-on-year. Economists call it the Jevons Paradox: when efficiency lowers the cost of using a resource, total consumption rises rather than falls.
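To make the arithmetic concrete, here is a minimal Python sketch using only the figures quoted above. It is illustrative, not a forecast. It assumes a textbook constant-elasticity demand curve, and it treats the benchmark cost per task as a stand-in for the price customers actually pay. Neither assumption comes from the cited sources, and the function names are hypothetical. Under those assumptions, spending rises as prices fall only when demand elasticity exceeds 1, which is the Jevons condition.

```python
import math

# Figures quoted in the article.
COST_PER_TASK_START = 4500.00  # USD per benchmark task, start of 2025
COST_PER_TASK_END = 11.64      # USD per benchmark task, end of 2025

# Revenue multiples from the article. "Over $9 billion" makes 9.0 a floor.
REVENUE_MULTIPLES = {
    "Anthropic run rate": 9.0 / 1.0,
    "OpenAI 2024 to 2025 (projected)": 20.0 / 3.7,
}


def cost_reduction_factor(start: float, end: float) -> float:
    """How many times cheaper the task became."""
    return start / end


def implied_elasticity(spend_multiple: float, price_multiple: float) -> float:
    """Elasticity e under the ASSUMED demand curve Q = k * P**(-e).

    Spend is P * Q = k * P**(1 - e), so
    spend_multiple = price_multiple**(1 - e), which solves to
    e = 1 - ln(spend_multiple) / ln(price_multiple).
    """
    return 1.0 - math.log(spend_multiple) / math.log(price_multiple)


cost_drop = cost_reduction_factor(COST_PER_TASK_START, COST_PER_TASK_END)
price_multiple = 1.0 / cost_drop  # ASSUMPTION: price tracks benchmark cost

print(f"Cost per task fell roughly {cost_drop:.0f}x")  # roughly 387x
for name, multiple in REVENUE_MULTIPLES.items():
    e = implied_elasticity(multiple, price_multiple)
    print(f"{name}: spend x{multiple:.1f}, implied elasticity {e:.2f}")
```

An elasticity of exactly 1 would leave total spending flat as prices fall. Both revenue figures imply values above 1 under these assumptions, which is the pattern the paradox describes.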

What changed isn’t the scale of the buildout. It’s who’s building and where.

Before 2025, the infrastructure narrative was centralized: US hyperscalers stacking GPU clusters in Virginia, Texas, and Oregon.

After efficiency breakthroughs collapsed the capital threshold for sovereign AI, the buildout fragmented globally.

India launched BharatGen, a government-backed consortium at IIT-Bombay developing sovereign multilingual models, with Sarvam AI targeting Indian-language benchmarks. South Korea declared its intention to become a top-three AI power. Saudi Arabia committed over $5 billion for a new AWS region alongside its sovereign AI fund. Thailand attracted over $10 billion in data center investment pledges in just six months, with commitments from AWS, Google, and TikTok’s parent ByteDance.

The demand profile shifted too.

Training runs happen once. Inference — running models in production — happens continuously, everywhere. As open-weight models like DeepSeek-R1, Llama, and Falcon proliferate, they need hosting infrastructure in Bangkok, Mumbai, Riyadh, and São Paulo — not just Ashburn, Virginia. Even the Stargate Project adapted: what began as an aspirational $500 billion US-centric initiative has evolved toward distributed “Sovereign Stargate” nodes in markets including Norway and the UAE. The architecture of the buildout is following the architecture of the models — distributed, modular, geographically dispersed.

Dell’Oro projects that while the top four US hyperscalers will represent about half of global data center capex by 2030, emerging AI model builders, neo-cloud providers, and sovereign cloud initiatives are growing at significant rates. Over 50 GW of new data center capacity is expected over the next five years.

The buildout didn’t shrink; it moved. For energy executives and infrastructure investors, the next wave of power purchase agreements, grid interconnection requests, and site selection decisions won’t cluster in Northern Virginia. They’ll show up in Thailand’s Eastern Economic Corridor, India’s emerging compute hubs, the Gulf, and a dozen other markets converting efficiency breakthroughs into sovereign compute capacity. The firms still modeling AI demand as a US-centric phenomenon are working with last year’s map.

Sources: Dell’Oro Group (Feb 2026), Peterson Institute for International Economics (Feb 2026), Epoch AI, SemiAnalysis, Reuters, TechCrunch