Who Answers When the Grid AI Agent Gets It Wrong?

Autonomous AI agents are already managing power flows and drilling wells. But who's liable, who's insured, and what happens when the AI optimizing the grid conflicts with the AI consuming from it?

In 2024, more than fifty data centers in Northern Virginia tripped to backup generators simultaneously during a localized voltage instability event. PJM Interconnection’s automated grid controls, the Energy Management System and Automatic Generation Control, caught the cascading failure and rebalanced the system before it reached consumers. No blackout. No headlines. Human operators were aware of the alarm state, but the split-second rebalancing decisions were made by the system before human intervention was possible. The operators acted on data the automated systems had synthesized. The critical calls had already been made.
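
For readers unfamiliar with AGC: its core control signal is conventionally the Area Control Error (ACE), which combines the deviation of net interchange from schedule with the deviation of frequency from its nominal value. Public accounts of the Virginia incident do not say which signals the controls acted on, so the Python sketch below only illustrates the generic textbook ACE calculation. It omits the time-error and metering-error correction terms, and every number in it is hypothetical.

```python
"""Illustrative Area Control Error (ACE): the signal AGC drives toward zero.

Assumptions: simplified formula without time-error or metering-error
corrections; all inputs in the example are hypothetical.
"""


def area_control_error(ni_actual_mw: float, ni_scheduled_mw: float,
                       freq_actual_hz: float, freq_scheduled_hz: float,
                       bias_mw_per_0_1hz: float) -> float:
    """ACE = (NIa - NIs) - 10 * B * (Fa - Fs).

    B (bias_mw_per_0_1hz) is negative by convention, so a frequency rise
    adds a positive contribution to ACE.
    """
    interchange_error = ni_actual_mw - ni_scheduled_mw
    frequency_term = 10.0 * bias_mw_per_0_1hz * (freq_actual_hz - freq_scheduled_hz)
    return interchange_error - frequency_term


def agc_direction(ace_mw: float, deadband_mw: float = 10.0) -> str:
    """Positive ACE means the area is over-generating, so AGC lowers output."""
    if ace_mw > deadband_mw:
        return "lower generation"
    if ace_mw < -deadband_mw:
        return "raise generation"
    return "hold"


if __name__ == "__main__":
    # Hypothetical sudden load loss: exports rise 400 MW above schedule and
    # frequency climbs to 60.02 Hz, with an assumed bias of -1000 MW/0.1 Hz.
    ace = area_control_error(400.0, 0.0, 60.02, 60.0, -1000.0)
    print(f"ACE = {ace:.0f} MW -> {agc_direction(ace)}")
```

Running it prints `ACE = 600 MW -> lower generation`: losing load leaves the area over-generating, so AGC pulls generation down. Production EMS/AGC implementations layer filtering, ramp limits, and unit-level economic allocation on top of this signal.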

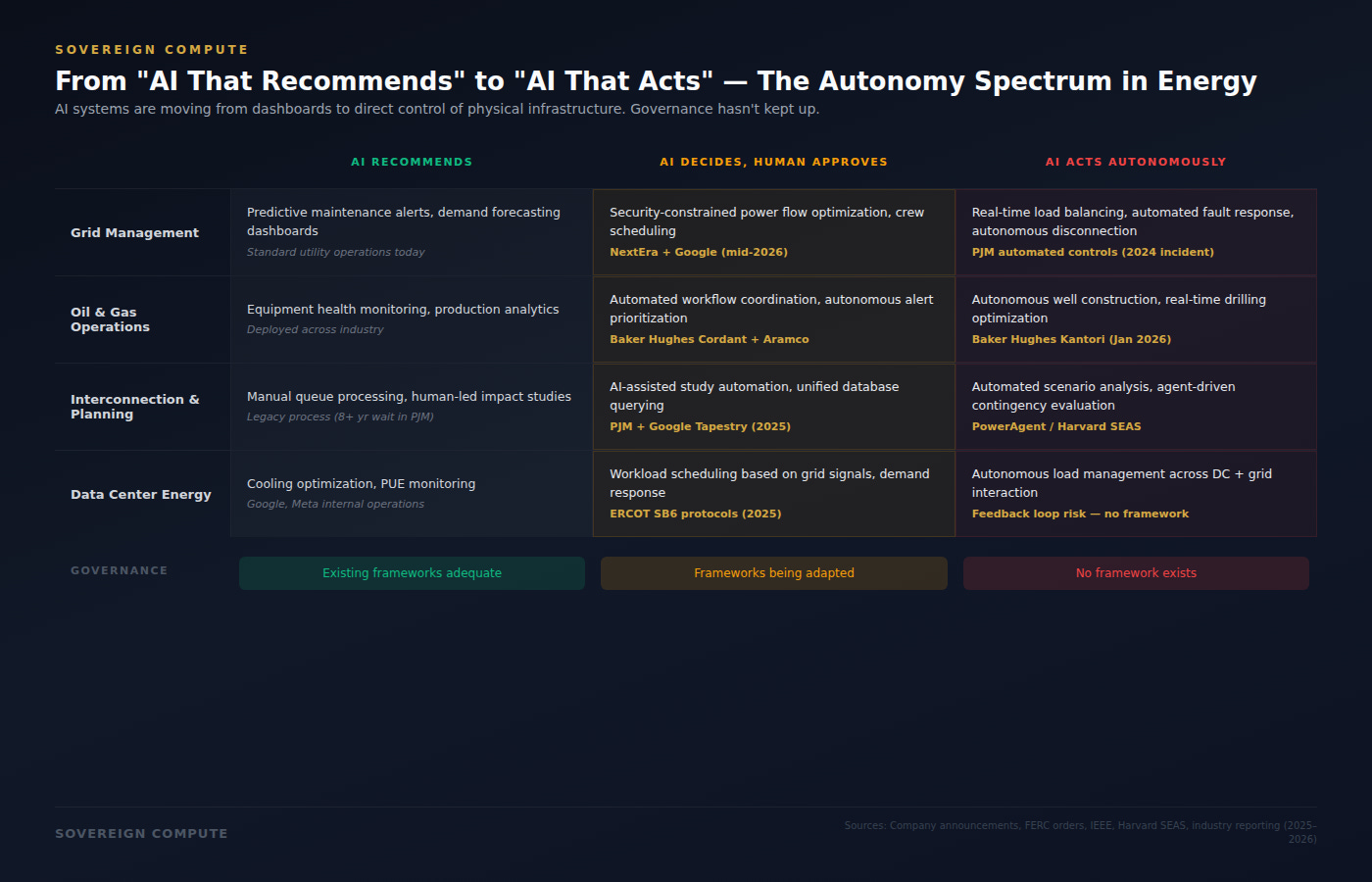

The debate about AI and energy has so far focused mainly on consumption: how many megawatts data centers need, where the power comes from, who pays for the grid upgrades. That’s a critical problem and a clear vulnerability, but it’s yesterday’s problem. The next one is harder: AI is beginning to operate the systems that produce, distribute, and manage energy. And the governance, liability, and insurance frameworks required for that shift do not yet exist.

ALREADY IN PRODUCTION

The deployments are further along than most realize. In December 2025, NextEra Energy, America’s largest utility, announced a landmark partnership with Google Cloud to deploy agentic AI across its field operations and grid management. The system integrates Google’s generative and agentic AI with NextEra’s asset data to predict equipment failures, autonomously schedule crew deployments around weather and supply chain disruptions, and optimize power flows using security-constrained modeling. The first commercial product is slated to launch on the Google Cloud Marketplace in the second half of 2026. Google’s forecasting models, TimesFM 3.0 for time series and WeatherNext 2 for weather prediction, will feed directly into grid operations. NextEra raised its earnings guidance on the strength of the partnership.

In oil and gas, Baker Hughes launched Kantori in January 2026: a unified autonomous well construction system that uses AI and physics-based models to optimize drilling operations with “limited human intervention.” Its Cordant platform, now deployed with Aramco across four booster gas compression stations in Saudi Arabia, uses autonomous asset management to automate decision-making across asset health, process optimization, and energy management. These agents are currently bounded by physics-informed constraints and cannot rewrite their own safety parameters. But the direction is clear: the industry is moving from tools that recommend to systems that act.

On the grid side, PJM Interconnection partnered with Google’s Tapestry unit in April 2025 to rebuild its interconnection process using AI, deploying the “Twin Thread” model to unify dozens of databases into a single system that project developers, grid planners, and operators can query in real time. ERCOT, the Texas grid operator, announced an entirely new Enterprise Data and AI Reliability Office in December 2025, explicitly designed to manage the intersection of autonomous systems and grid reliability.

And at Harvard, Professor Le Xie’s PowerAgent community, billed as the first open-source initiative for agentic AI in power systems and the subject of a cover story in IEEE Power and Energy Magazine, is building the foundational architecture: AI agents that can launch grid impact studies, run contingency analyses, evaluate interconnection requests, and generate operator reports, all through natural language interfaces that connect directly to engineering software via Anthropic’s Model Context Protocol. The key design feature is a “flexible human-in-the-loop”: the agent presents results for review rather than executing critical decisions autonomously. But the direction of travel is unmistakable. The loop is getting shorter.
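
The two guardrails described in this section, a physics-informed safety envelope the agent cannot rewrite and a “flexible human-in-the-loop” that routes critical decisions to review, can be sketched together as a single action gate. This is a hypothetical Python illustration, not Baker Hughes’s or PowerAgent’s code: the class names, the limits, and the flag that marks an action as critical are all assumptions.

```python
"""Hypothetical action gate: immutable safety envelope plus human-in-the-loop review."""
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class SafetyEnvelope:
    """Physics-derived limits fixed at deployment; frozen so the agent cannot reassign them."""
    min_setpoint: float
    max_setpoint: float
    max_step: float  # largest change permitted in a single action


@dataclass(frozen=True)
class ProposedAction:
    asset_id: str
    current: float
    target: float
    critical: bool  # assumed flag, e.g. for switching or load disconnection


def gate(action: ProposedAction, env: SafetyEnvelope) -> Tuple[str, float]:
    """Return (decision, setpoint).

    1. Rate-limit the move, then clamp it into the absolute bounds.
    2. Critical actions are queued for an operator instead of executed.
    """
    step = max(-env.max_step, min(env.max_step, action.target - action.current))
    setpoint = max(env.min_setpoint, min(env.max_setpoint, action.current + step))
    decision = "needs_review" if action.critical else "execute"
    return decision, setpoint


if __name__ == "__main__":
    env = SafetyEnvelope(min_setpoint=0.0, max_setpoint=100.0, max_step=5.0)
    print(gate(ProposedAction("compressor-1", 50.0, 80.0, critical=False), env))
    print(gate(ProposedAction("compressor-2", 98.0, 120.0, critical=True), env))
```

The example prints `('execute', 55.0)` and then `('needs_review', 100.0)`. Clamping happens before review, so an operator is only ever shown a setpoint already inside the envelope. A frozen dataclass is merely a language-level guard; a real deployment would more plausibly enforce the envelope in a separate controller the agent has no write access to.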

THREE WIDENING GAPS

The technology is moving faster than the institutions designed to govern it. Three structural gaps are opening, and each one represents both a risk for infrastructure investors and a strategic question for policymakers.

The first is the liability gap. When an autonomous AI agent makes a decision that affects grid stability (rerouting power flows, disconnecting a load, dispatching a crew), who is accountable? Today, there is no clear answer. The AI developer? The utility that deployed it? The cloud provider hosting the model? The grid operator that approved the protocol? Existing regulatory frameworks were built for a world where human operators make decisions and are accountable for them. FERC’s December 2025 order directing PJM to establish new rules for data center colocation didn’t address autonomous AI operations at all; the agency’s attention remains on where power flows, not on who or what decides how it flows. The gap between operational reality and regulatory capacity is widening every quarter.
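
Assigning liability presupposes being able to reconstruct, after the fact, which model made a decision, who hosted and deployed it, which protocol approval it ran under, and whether a human signed off. A minimal Python sketch, assuming a hypothetical hash-chained decision log; every field name here is illustrative and is not drawn from any regulatory standard or vendor product.

```python
"""Hypothetical tamper-evident log of autonomous grid decisions."""
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass(frozen=True)
class DecisionRecord:
    timestamp_utc: str
    action: str                    # what the agent did, e.g. "disconnect load L-12"
    model_version: str             # AI developer's model and version
    hosted_by: str                 # cloud provider running inference
    deployed_by: str               # utility that put the agent in service
    protocol_approval: str         # grid operator's approval reference
    human_reviewer: Optional[str]  # None if the agent acted autonomously


def _digest(record: dict, prev_hash: str) -> str:
    payload = json.dumps({"record": record, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list, rec: DecisionRecord) -> dict:
    """Append a record whose hash also covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    record = asdict(rec)
    entry = {"record": record, "prev_hash": prev_hash, "hash": _digest(record, prev_hash)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute every hash; an edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True
```

The chain makes after-the-fact edits detectable, not impossible. It records the parties the liability question turns on; it does not decide which of them is accountable.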

The second is the insurance gap, and this may be the most consequential bottleneck. Major insurers, led by AIG, have begun pulling back from AI liability coverage: AIG’s AI exclusionary rider became standard for mid-market policies in late 2025. Lloyd’s of London syndicates are writing specialty AI policies through new ventures like Testudo and Armilla, but these products are expensive, narrow, and explicitly exclude AI developers and vendors. Lloyd’s began requiring algorithmic audits as a prerequisite for these policies in February 2026. The core problem, as Lexology documented in January 2026, is twofold: insurers view AI systems as “black boxes” whose outputs are neither deterministic nor consistent, and AI errors propagate across user populations simultaneously, creating what underwriters classify as catastrophe exposure rather than standard liability. Model drift has been flagged as an uninsurable risk. A single model update that miscalculates grid loads across a utility’s entire territory isn’t an error. It’s a systemic event. For energy companies deploying agentic AI on critical infrastructure, the inability to insure autonomous decision-making is becoming a practical deployment constraint, not a theoretical concern.
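
The difference between standard liability and catastrophe exposure comes down to correlation. A toy calculation with hypothetical numbers makes the point: 100 sites, each with a 1% annual chance of a damaging AI error. The expected number of failures is identical whether errors are independent or come from one shared model update; what changes is the tail.

```python
"""Toy comparison of independent vs. shared-model (correlated) AI failures.

Hypothetical inputs: n sites, each failing with probability p per year.
"""
from math import comb


def p_at_least_k_independent(n: int, p: float, k: int) -> float:
    """P(at least k of n sites fail) when each site fails independently (binomial tail)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


def p_at_least_k_shared(n: int, p: float, k: int) -> float:
    """Every site runs the same model: a single bad update (prob p) fails all n at once."""
    if not 1 <= k <= n:
        raise ValueError("k must be between 1 and n")
    return p


if __name__ == "__main__":
    n, p = 100, 0.01
    print("expected failures (either case):", n * p)
    print("P(>=50 fail), independent:", p_at_least_k_independent(n, p, 50))
    print("P(>=50 fail), shared model:", p_at_least_k_shared(n, p, 50))
```

Expected losses are the same in both cases, about one failure a year, but the chance that half the fleet fails together goes from vanishingly small under independence to a flat 1% under a shared model. That jump in tail risk is what underwriters price as catastrophe exposure.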

The third is the feedback loop. Agentic AI running inference at the edge — in substations, on pipelines, at wellheads — changes where power demand materializes on the grid. It’s not just that AI consumes energy; it’s that AI’s own operational decisions reshape the energy system it’s managing. ERCOT’s new rules mandate that large loads above 75 MW participate in demand response programs and can be remotely disconnected during grid emergencies. But what happens when the AI agent managing a data center’s workload is also the AI agent optimizing the grid that feeds it? The optimization objectives may not align. The data center wants uptime. The grid wants stability. When both sides of that equation are being managed by autonomous systems operating faster than human review cycles, the conflict resolution mechanism doesn’t exist yet.
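
One way to make that missing mechanism concrete is an explicit precedence rule agreed before either agent acts. Outside emergencies, the data center agent’s request stands; during a declared grid emergency, the grid operator’s curtailment instruction overrides uptime for large loads. The Python sketch below is hypothetical. Only the 75 MW threshold comes from the ERCOT rules described above; the names, the two grid states, and the proportional curtailment are illustrative assumptions.

```python
"""Hypothetical arbitration between a data center's workload agent and a grid agent."""
from dataclasses import dataclass
from enum import Enum

LARGE_LOAD_THRESHOLD_MW = 75.0  # from the ERCOT large-load rules; loads above it are curtailable


class GridState(Enum):
    NORMAL = "normal"
    EMERGENCY = "emergency"


@dataclass(frozen=True)
class LoadRequest:
    site: str
    facility_mw: float   # interconnected size, which determines whether the rule applies
    requested_mw: float  # what the data center agent wants to draw (uptime objective)


def allowed_draw_mw(req: LoadRequest, state: GridState, curtail_fraction: float) -> float:
    """Return the MW the site may draw under the assumed precedence rule.

    curtail_fraction is the grid agent's instruction: 0.0 = no cut, 1.0 = full disconnection.
    """
    curtail_fraction = max(0.0, min(1.0, curtail_fraction))
    if state is GridState.NORMAL or req.facility_mw <= LARGE_LOAD_THRESHOLD_MW:
        return req.requested_mw  # data center objective prevails
    return req.requested_mw * (1.0 - curtail_fraction)  # grid stability prevails
```

Which side wins is a policy choice, not an engineering one. The code only shows that the rule has to be written down somewhere both agents are bound to respect.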

THE SOVEREIGN QUESTION

For countries building sovereign AI strategies, this adds a layer of dependency that the “Three Models of Sovereign AI” framework identified but didn’t fully explore. When NextEra deploys Google’s agentic AI to manage its grid, the intelligence layer controlling physical operations comes from an outside technology vendor; when Aramco runs Baker Hughes’s Cordant on its gas infrastructure, that vendor is also foreign. The Gulf states’ technological dependence isn’t just about chips and foundation models anymore. It extends to the autonomous systems managing their most critical infrastructure. India’s application-layer strategy assumes foreign models are neutral tools. They are not. They are increasingly autonomous decision-makers with direct authority over physical systems. Whose safety protocols govern an AI agent controlling a grid switch in Riyadh? Whose liability framework applies? Whose insurance covers the failure?

The Anthropic-Pentagon confrontation demonstrated that the governance of AI models is a contested political space. Now extend that logic to agentic AI on critical infrastructure. The same model that a government might blacklist for political reasons could, in parallel, be managing grid stability for an allied country’s power system. The surface area for geopolitical disruption has expanded from data flows and chip exports to the operational control layer itself.

THE DECISION WINDOW

Within five years, no energy grid of significant scale will operate without autonomous AI agents. The technology trajectory is clear, the commercial incentives are overwhelming, and the deployments are already in production. The question is whether the governance, liability, and insurance frameworks will be in place before these systems operate at scale, or whether, as in previous infrastructure revolutions, the institutions will arrive a decade late.

For infrastructure investors, the insurance gap is the immediate signal. Any energy investment thesis that assumes agentic AI deployment at scale needs to model the insurance constraint — because if critical infrastructure can’t be underwritten, it can’t be financed. For policymakers, the regulatory gap is the urgent one: FERC, ERCOT, and PJM are redesigning interconnection rules for a world of AI-driven demand, but none of them have established frameworks for AI-driven operations. For energy executives, the workforce question is existential but deferred: the shift from “AI that recommends” to “AI that acts” redefines what operators do, and most organizations are not preparing their workforce for this transition.

The Virginia incident in 2024 ended well. The autonomous system caught the failure. But the next incident may involve an AI agent making a decision that a human operator would not have made — and when that happens, the question won’t be whether the technology works. It will be who answers for it. That question is unanswered today. And the systems are already running.

SOURCES

NextEra Energy / Google Cloud, “Landmark Strategic Energy and Technology Partnership,” Dec. 8, 2025

Baker Hughes, “Launch of Kantori™ Autonomous Well Construction Solution,” Jan. 28, 2026

Baker Hughes / Aramco, “Cordant™ APM Deployment Across Four BGCS,” Nov. 19, 2025

PJM / Google Tapestry, “Twin Thread” AI-driven interconnection partnership, Utility Dive, Apr. 10, 2025

ERCOT, “Strategic Organizational Changes,” Enterprise Data and AI Reliability Office, Dec. 12, 2025

FERC Order No. 2025-A, “Directs PJM to Create New Rules for Co-located Load,” Dec. 18, 2025

ERCOT / Texas SB6, large load interconnection rules (75 MW threshold), effective 2025

Zhang & Xie, “PowerAgent: A Road Map Toward Agentic Intelligence in Power Systems,” IEEE Power and Energy Magazine, vol. 23, no. 5, Sept.–Oct. 2025 (cover story)

Harvard SEAS, PowerAgent Community launch, PAI Symposium, May 2025

Kyndryl / PJM / Dominion Energy, “How AI is Reshaping Utilities and the Power Grid,” Feb. 2026 (Virginia incident)

Yale Clean Energy Forum, “Grid Modernization for Data Center and AI Loads,” Nov. 12, 2025

Lexology / Wiley, “When Insurance Won’t Cover AI,” Jan. 13, 2026 (model drift as uninsurable risk)

Metropolitan Risk Advisory, “Major Insurers Are Pulling Back from AI Liability,” Nov. 24, 2025 (AIG exclusionary rider)

Intelligent Insurer, “What Insurers Must Understand Before Underwriting the Next AI-Driven Catastrophe,” Feb. 3, 2026

Lloyd’s of London, algorithmic audit requirement for AI specialty policies, Feb. 2026

American Bar Association, “The Evolving Landscape of AI Insurance,” 2025

EDITORIAL NOTE

The Virginia data center incident is reported by Kyndryl citing PJM and Dominion Energy sources. PJM records indicate approximately 52 data centers were involved; the article uses “more than fifty” for accuracy. The split-second rebalancing was supervised autonomy: operators were aware of the alarm state, but the system acted before human intervention was possible. The “three gaps” framework is the author’s analytical construct. Agentic AI deployments described are based on company announcements and press releases; actual operational scope and autonomy levels may differ from marketing descriptions. Baker Hughes’s Cordant agents are bounded by physics-informed constraints and cannot rewrite their own safety parameters. Insurance market analysis draws on legal and industry reporting; specific policy terms vary by carrier and jurisdiction. Gulf and India references draw on the author’s “Three Models of Sovereign AI” framework (Sovereign Compute, Feb. 2026).