Three Models of Compute-Energy Integration

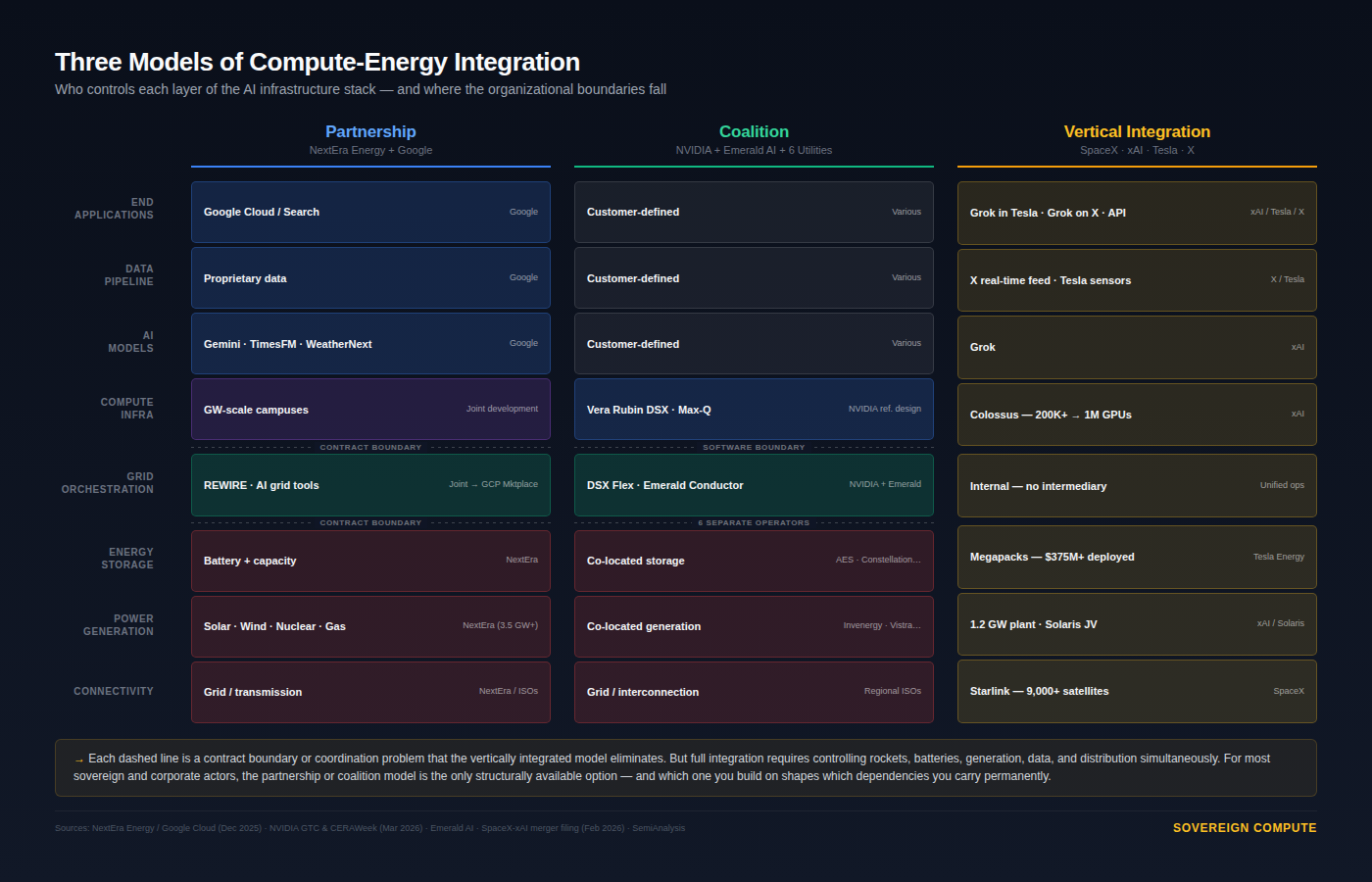

Utilities are building AI software. AI companies are building power plants. Three models of integration are forming: partnership, coalition, and full vertical control.

Eighteen months ago, utilities sold electricity and hyperscalers bought it. That boundary is dissolving. The AI buildout is producing vertically integrated compute-energy entities: companies that own the models, the data centers, the storage, and the generation under one roof. Which of the three models you build on determines which dependencies you carry.

In December, NextEra Energy and Google Cloud expanded their partnership to develop multiple gigawatt-scale data center campuses across the United States, each with co-located power generation purpose-built for the facility. NextEra already has roughly 3.5 GW in operation or contracted with Google. More interesting than the megawatts, though, is what’s happening to the utility itself. NextEra is taking Google’s AI models (TimesFM for time-series forecasting, WeatherNext for weather prediction) and applying them to its own grid operations through an internal initiative called Project REWIRE, which aims to run the power grid as a predictive system. Rather than adopting generic AI logic, NextEra is running fine-tuned versions of these models on its own operational data and combining them with proprietary grid and optimization models. This allows the company to forecast demand, renewable output, equipment failures, and weather impacts in real time. The result is better reliability, lower costs, and a shift from reactive operations to proactive decision-making. NextEra is also positioning the capability as a scalable product for other utilities.

The first commercial product from the initiative is scheduled to launch on the Google Cloud Marketplace by mid-2026. Much as Amazon monetized a delivery network that had been a cost center, NextEra is turning an internal operations system into a commercial software product and selling predictive grid intelligence as a service. Petter Skantze, NextEra’s chief risk officer, oversees the program. A utility executive running an AI transformation that sells software on a hyperscaler’s platform: that is an organizational mutation rather than a procurement deal.

In parallel, at CERAWeek in March, NVIDIA and Emerald AI announced that six major US energy companies—AES, Constellation, Invenergy, NextEra, Nscale Energy & Power, and Vistra—would collaborate on flexible AI factories designed to operate as grid assets. NVIDIA’s Vera Rubin DSX reference design now includes DSX Flex, a software layer connecting compute workloads directly to power-grid services. Emerald’s Conductor platform demonstrated the ability to cut AI data center power consumption by 25% during grid stress events without degrading compute performance.

The math behind that 25% matters. Emerald estimates that if AI data centers can flex their power requirements by 25% for roughly 200 hours per year, that unlocks approximately 100 GW of stranded grid capacity across the US—more than the entire American nuclear fleet. The bottleneck, in other words, is not simply generation. It is also the inflexibility of the load. Utilities have been reluctant to approve large interconnections for AI facilities because a data center that draws 500 MW around the clock is a liability to grid stability. A data center that can throttle to 375 MW during peak stress, on software command, is something a utility can work with. That is why the orchestration software—the layer between compute and grid—has become the critical enabler for getting AI facilities connected faster.
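The facility-level arithmetic in that example can be sketched in a few lines of Python. This is a minimal illustration, assuming a flat 500 MW baseline drawn around the clock and a non-leap year; the function name `flex_profile` and its fields are hypothetical and come from no vendor API. It does not attempt to reproduce Emerald’s 100 GW system-wide estimate, which depends on grid data not given here.

```python
HOURS_PER_YEAR = 8760  # assumption: non-leap year


def flex_profile(baseline_mw: float, flex_fraction: float, flex_hours: float) -> dict:
    """Summarize what a flex commitment means for one facility.

    Assumes the facility draws a constant baseline_mw all year and reduces
    its draw by flex_fraction during flex_hours of grid stress.
    """
    reduction_mw = baseline_mw * flex_fraction      # power shed on command
    throttled_mw = baseline_mw - reduction_mw       # draw during stress events
    curtailed_mwh = reduction_mw * flex_hours       # energy given up per year
    annual_mwh = baseline_mw * HOURS_PER_YEAR       # energy at full baseline
    return {
        "reduction_mw": reduction_mw,
        "throttled_mw": throttled_mw,
        "share_of_hours": flex_hours / HOURS_PER_YEAR,
        "curtailed_mwh": curtailed_mwh,
        "share_of_energy": curtailed_mwh / annual_mwh,
    }


# Figures from the text: 500 MW baseline, 25% flex, roughly 200 hours per year.
profile = flex_profile(baseline_mw=500.0, flex_fraction=0.25, flex_hours=200.0)
for name, value in profile.items():
    print(f"{name}: {value:.4f}")
```

Under these assumptions the facility throttles to 375 MW for about 2.3% of the hours in a year and gives up 25,000 MWh, or roughly 0.57% of its annual energy. That asymmetry is the point: a small sacrifice in compute energy buys a large reduction in the peak demand the grid has to plan around.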

Emerald was founded by Varun Sivaram, former CTO of ReNew Power in India and former senior US climate diplomat—a background that straddles exactly the energy-compute-sovereignty intersection. The coalition model accepts that compute and energy will remain under separate ownership and uses a shared software standard to coordinate between them.

It is worth noting what is taking shape here in aggregate.

NVIDIA, Emerald AI, Google, NextEra, and five other major US energy companies are assembling a domestic compute-energy integration ecosystem in which the software connecting AI workloads to grid services is becoming a proprietary American advantage. REWIRE, DSX Flex, and Emerald’s Conductor are not generic tools: they are trained on US grid data, validated against US interconnection rules, and sold through US cloud platforms. Countries building sovereign AI infrastructure today can import GPUs. They cannot easily import the orchestration layer that makes those GPUs grid-compatible at scale.

Then there is the Musk model.

When SpaceX acquired xAI in February for a combined $1.25 trillion, the headline was orbital data centers. The structure underneath is more telling. Across his companies, Musk now owns every layer of the AI infrastructure stack: compute (Colossus in Memphis, 200,000+ GPUs targeting one million), energy storage (Tesla Megapacks, over $375 million deployed at the Memphis site, with a 50 GWh/year Megafactory in Brookshire, Texas currently ramping up), power generation (a 1.2 GW dedicated plant, plus a joint venture with Solaris Energy carrying 1,140 MW in its orderbook), connectivity (Starlink, 9,000+ satellites), a proprietary data pipeline (X’s real-time feed), and end-user distribution (Grok embedded in Tesla vehicles).

Musk didn’t negotiate a power purchase agreement (PPA). He didn’t join an interconnection queue. He shipped a power plant across an ocean to Tennessee. That is vertical integration of a kind the technology industry hasn’t seen since AT&T owned the phones, the wires, and Bell Labs.

The three models sit on a spectrum of integration, and each carries different dependencies. In the partnership model, an orchestration layer such as REWIRE exists because the energy entity and the compute entity are separate organizations that need software to coordinate between them. In the coalition model, that software intermediary (Emerald’s Conductor, NVIDIA’s DSX Flex) serves even more parties. In the vertically integrated model, the orchestration layer disappears entirely: there is no organizational boundary to mediate.

Build on the partnership model and you get speed, but you layer your hyperscaler’s AI deep into your energy operations. Build on the coalition model and you get vendor flexibility, but you depend on a reference architecture you don’t own. The fully integrated model eliminates those trade-offs—but it requires controlling rockets, batteries, generation, data, and distribution simultaneously, a portfolio that exists exactly once on earth.

For anyone making infrastructure or sovereignty decisions around AI, the organizational model matters as much as the technology specification. Signing a PPA with a utility is a different structural commitment from co-developing grid AI tools on a hyperscaler’s marketplace, and neither is the same as owning your own power plant. The question worth asking before your next capital allocation: does your current architecture create the optionality to deepen integration over time, or does it lock you into a permanent intermediary?

A note on independence: All opinions shared in this newsletter are my own and do not reflect the views of dmg events, ADIPEC, or any affiliated organizations. This is personal analysis, not institutional positioning.

Sources

• NextEra Energy / Google Cloud partnership press release, December 8, 2025

• NVIDIA and Emerald AI, CERAWeek announcement, March 23, 2026

• NVIDIA Vera Rubin DSX AI Factory reference design, GTC, March 16, 2026

• Emerald AI Conductor demonstration, EPRI DCFlex Initiative, Phoenix, AZ, July 2025

• Emerald AI $24.5M seed round (Radical Ventures, NVentures, CRV); $42.5M follow-on (October 2025)

• SpaceX–xAI merger, February 2, 2026 (CNBC, Bloomberg, TechCrunch)

• SemiAnalysis, “xAI’s Colossus 2,” September 2025

• NextEra Energy Q4 2025 earnings; January 2026 investor presentation; NEE leadership page (Skantze bio)