The AI Industry’s Regulatory Strategy: Change the Jurisdiction

From land to sea to orbit — AI infrastructure is engineering around regulation itself. And this weekend, the Gulf model got its first real stress test.

“The Gulf’s response to Iran’s retaliatory strikes demonstrated exactly why the region remains a serious contender for global AI infrastructure.”

In the span of three weeks this February, the Pentagon airlifted a nuclear reactor to Utah by C-17, a British consortium pitched floating nuclear power stations to be docked at US naval bases, and SpaceX filed with the FCC for an expanded constellation of up to 30,000 Gen2 nodes capable of dedicated edge-compute and orbital data processing. Meanwhile, in Malaysia’s Johor state, Chinese engineers were training AI models on rented Nvidia servers using physically transported hard drives, in data centers that don’t classify those servers as controlled items. These aren’t unrelated events. They are four expressions of a single structural logic: AI infrastructure is migrating to wherever the rules are thinnest, whether on land, at sea, in orbit, or across borders.

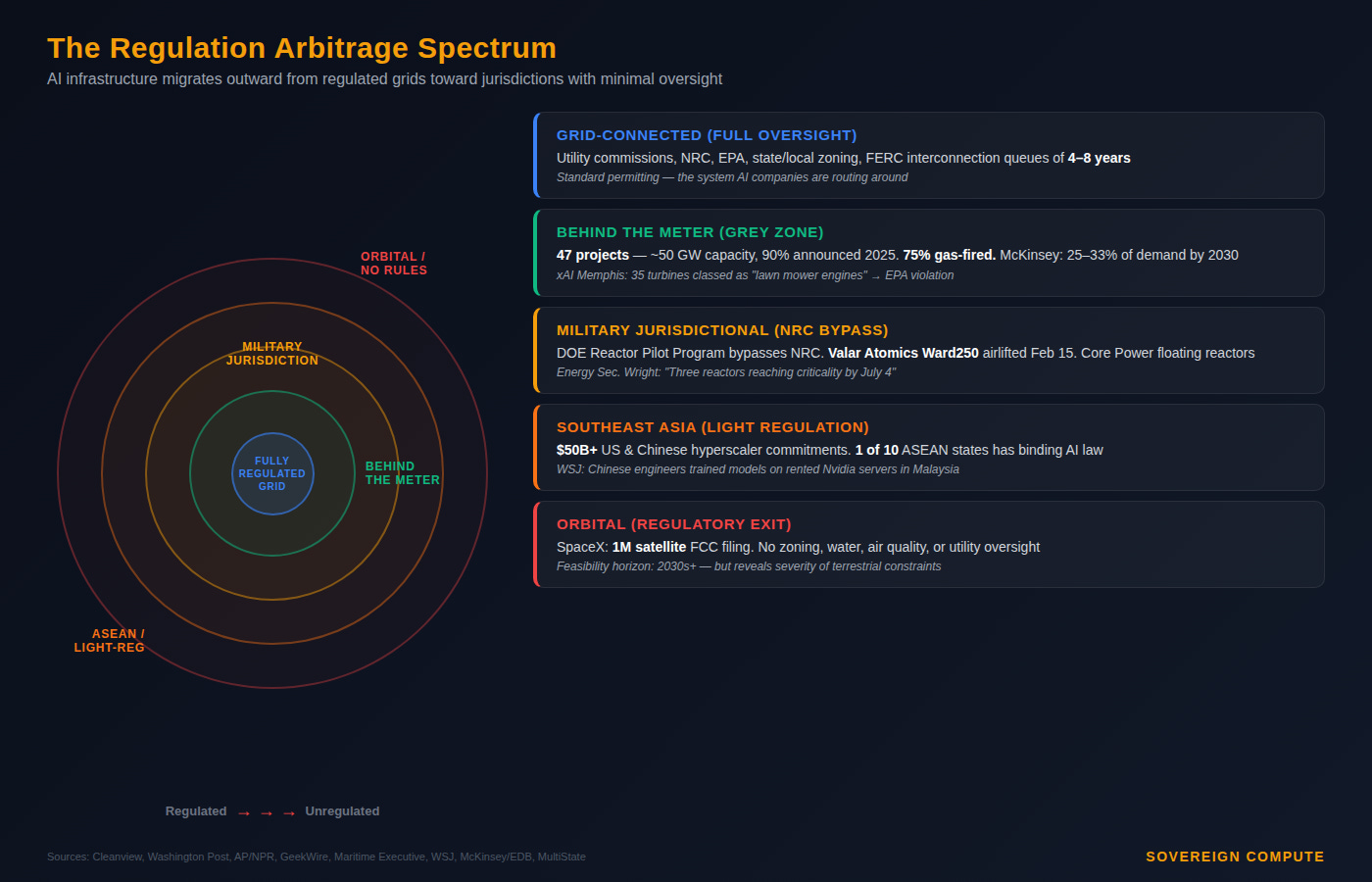

The pattern is regulation arbitrage — the systematic relocation of energy-intensive compute to jurisdictions where existing oversight either doesn’t apply or can be bypassed. In the United States, it runs along a spectrum. Behind-the-meter gas generation lets data centers skip interconnection queues and avoid utility commissions. Cleanview, an energy analytics firm, has identified 47 such projects representing approximately 50 GW of capacity, 90% announced in 2025 alone. McKinsey estimates 25–33% of all incremental data center demand through 2030 will be met behind the meter. One project in Texas will consume more power than all of Chicago without connecting to a single transmission line.

Further along the spectrum, military-jurisdictional nuclear bypasses the NRC entirely. The Valar Atomics airlift on February 15, in which three C-17s carried the Ward250 reactor to Utah with Energy Secretary Chris Wright aboard, was explicitly framed as deregulation in action. The DOE’s Reactor Pilot Program routes licensing through the Department of Energy rather than the NRC, whose review can take a decade. Core Power, a London-based nuclear startup, is separately negotiating to dock floating reactors at military installations, drawing on naval designs that bypass civilian oversight. At the far end, SpaceX’s orbital filing proposes to exit the terrestrial regulatory stack entirely: no zoning, no water rights, no air quality review.

The Trump administration has made this logic explicit. David Sacks told a Davos audience in January that the president’s vision is to “let the AI companies become power companies.” The December 2025 executive order seeks to preempt state AI regulation. More than 300 state data center bills have been filed in the first six weeks of 2026, according to MultiState, even as the federal government encourages the industry to bypass state authority and generate its own power off-grid.

But the arbitrage isn’t only domestic. Southeast Asia is the international version of the same logic. Across six ASEAN economies, American and Chinese hyperscalers have committed over $50 billion in data center infrastructure, building side by side on the same grids under the same loose rules. As of December 2025, only one of ten ASEAN member states, Vietnam, has enacted binding security standards for AI developers, through its Digital Technology Industry Law. The rest rely on non-binding governance guidelines with no enforcement mechanism. For hyperscalers, this means speed. For geopolitical strategists, it means something else: a theater where US export controls are structurally difficult to enforce.

But regulation exists for reasons that don’t disappear when you move the infrastructure. The xAI precedent in Memphis illustrates what happens when speed overrides oversight. Musk’s AI company built the Colossus data center in 122 days in 2024 by deploying up to 35 unpermitted methane gas turbines in a predominantly Black neighborhood that the American Lung Association had already rated “F” for ozone pollution. The EPA ruled in January 2026 that xAI violated the Clean Air Act. The NAACP filed suit. This is the template that other developers are now replicating — build the power plant on site, stay off the grid, minimize regulatory contact. The Washington Post reported on February 19 that dozens of off-grid projects planned across Texas, New Mexico, Pennsylvania, Wyoming, and Tennessee follow the same playbook.

This dynamic has no real parallel elsewhere, and that’s the point. Each major compute geography carries a structural vulnerability that defines how its AI infrastructure gets built, and each resolves the tension differently. The Gulf states (Saudi Arabia, the UAE, Qatar) don’t face the regulatory friction that drives American regulation arbitrage. State-led capital and sovereign energy eliminate community-level opposition by design. The Gulf’s structural advantages are speed, capital depth, and an accommodating regulatory environment. Its structural vulnerability is geographic: proximity to a neighbor with ballistic missile capability and a declared willingness to use it. That vulnerability moved from theoretical to concrete on February 28, when Iranian retaliatory strikes sent 137 missiles and 209 drones toward the UAE alone, shutting Dubai and Abu Dhabi airports, closing airspace across the Gulf, and triggering Strait of Hormuz closure warnings. That corridor is adjacent to submarine cable clusters carrying an estimated 17% of global internet traffic.

The Gulf’s response demonstrated exactly why the region remains a serious contender for global AI infrastructure. The UAE Ministry of Defense confirmed that air defenses intercepted the vast majority of incoming threats with high efficiency and reported no significant material damage to critical infrastructure. Cybersecurity systems remained operational around the clock. Supply chains held. Essential services continued. The UAE’s integrated emergency management system activated rapid-response protocols and maintained public order throughout. These are not the hallmarks of fragile states. They are the hallmarks of states that have invested heavily in both digital and physical security architectures — and the reason that commitments like Stargate UAE’s 5GW campus, Microsoft’s $15.2 billion investment, and KKR’s $5 billion data center partnership were made in the first place. As CSIS argued one day before the strikes, if compute truly is the new oil, the Gulf’s growing AI role will bind Washington to the region more tightly than current doctrine acknowledges.

India resolves the tension differently still. Its AI buildout operates within a permitting environment explicitly designed to attract data center investment: in February 2026, India announced a 20-year tax break for domestically built data centers. India’s vulnerability is not regulatory friction or geographic exposure but dependency: the world’s largest AI deployment infrastructure built entirely on foreign models and foreign chips, with Indian languages comprising less than 1% of global training data. The European Union is moving in the opposite direction: the Energy Efficiency Directive requires data center operators to report power and water usage, with noncompliant facilities facing fines and restricted grid access.

The result, globally, is not a single system but a fracturing of the compute landscape into concentric rings of oversight: from fully regulated grid-connected facilities in Northern Virginia, to behind-the-meter gas plants operating in regulatory grey zones across Texas and Appalachia, to military-jurisdictional nuclear at sea, to the Gulf’s sovereign-capital model now stress-tested by kinetic conflict, to orbital infrastructure governed by spectrum licenses and little else. Each ring further from the center trades one form of accountability for another form of speed.

For infrastructure investors, energy executives, and policy strategists, the implication cuts both ways. The US model is most vulnerable to its own citizens at the ballot box. The Gulf model is most vulnerable to its geographic neighborhood — though this weekend demonstrated it has the defensive architecture to absorb the shock. The geography of compute is now shaped less by where the power is than by where the rules, the capital, and the security architectures align. The AI industry’s regulatory strategy is becoming, in effect: if you can’t change the rules, change the jurisdiction. The question is what gets lost in transit.

Editorial note: Gulf, Indian, and EU comparisons draw on the author’s “Three Models of Sovereign AI” framework (Sovereign Compute, Feb. 2026). The situation in Iran and the Gulf remains fluid; this analysis focuses on structural infrastructure implications, not the conflict itself. Orbital data center timelines remain speculative; the analysis focuses on what these proposals reveal about terrestrial constraints.

Sources: Washington Post (Feb. 19, 2026); Cleanview/Distilled Earth (Feb. 2026); AP/PBS/NPR (Feb. 21, 2026); GeekWire (Jan. 31, 2026); Maritime Executive (Feb. 23, 2026); CNBC (Jan. 16, 2026); Wall Street Journal (Jun. 2025); McKinsey/EDB “AI in Southeast Asia” (Feb. 2026); CSIS “Beyond the Matrix” (2025); MultiState (Feb. 20, 2026); CreditSights (Feb. 2026); Bain & Co Malaysia data center analysis; Stars and Stripes (Jan. 2, 2026).

A note on independence: All opinions shared in this newsletter are my own and do not reflect the views of dmg events, ADIPEC, or any affiliated organizations. This is personal analysis, not institutional positioning.