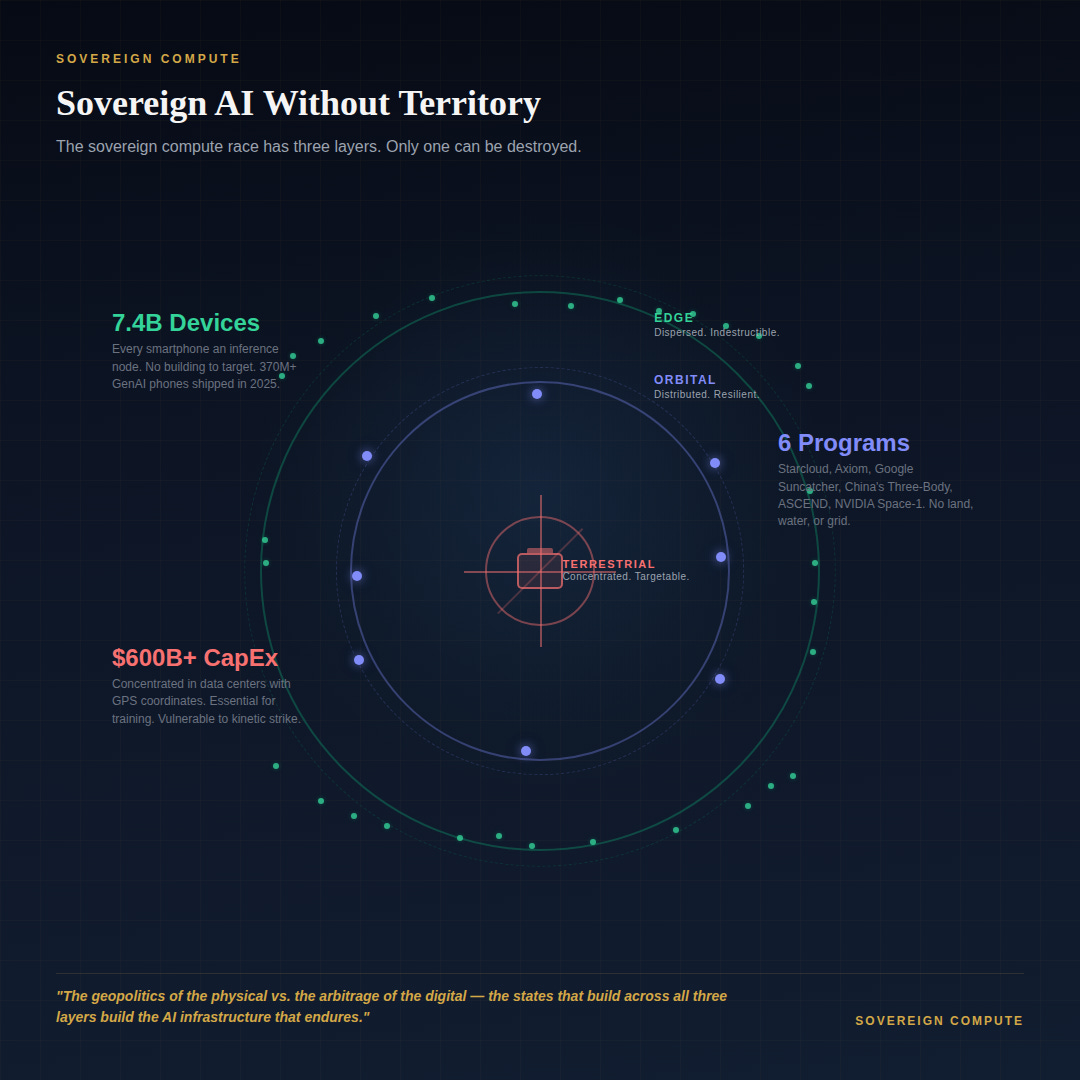

Sovereign AI Without Territory: How Orbit and Edge Could Break the Geography Trap

You Can Strike a Data Center. You Can't Strike a Billion Phones. Orbital compute in space and distributed edge intelligence on Earth are emerging as escape vectors from the land-water-energy constraints.

The states building the most data centers are building the 21st century's strategic infrastructure. The states building across all three layers — ground, orbit, and edge — are building the infrastructure that survives.

The sovereign compute orthodoxy runs in a straight line. Compute follows energy. Energy follows geography. Geography follows politics. Every major AI infrastructure strategy on Earth — the US hyperscaler buildout, the Gulf’s sovereign data center investments, India’s Digital India stack — operates within this binding logic. Training a frontier model consumes enough electricity to power a small city. Cooling a hyperscale cluster requires millions of gallons of water annually. Permitting a grid interconnection takes years. The physical layer is real, expensive, and slow, and it anchors the entire AI stack to specific coordinates on a map.

This framing is correct. It is also incomplete. The sovereign compute race as currently understood is a race to concentrate AI infrastructure in fixed, targetable locations — data centers with GPS coordinates, grid substations feeding 500 MW to a single campus, subsea cables landing at known points. The Iran-Gulf conflict demonstrated what this concentration means in practice: three AWS facilities struck, Hormuz shipping down 90–95%, and sovereign AI strategies built on the assumption of physical security suddenly facing the oldest problem in strategic infrastructure. Concentration is capability. Concentration is also vulnerability.

Two emerging vectors suggest that the geographic binding constraints of sovereign compute — while real for training — may not be permanent for inference, which is where AI meets the population. Call the current orthodoxy the geopolitics of the physical: the assumption that AI power is determined by who controls the most land, energy, and water in the most politically stable territory. The two vectors I’m going to describe represent something different — the arbitrage of the digital: the possibility that inference capacity can be distributed across domains where physical geography no longer binds. One points up. The other points out. Neither is a clean solution. Together, they redraw the map.

The first vector is orbital.

In January 2026, Axiom Space launched the first two orbital data center nodes to low Earth orbit. Weeks earlier, NVIDIA-backed Starcloud ran Google’s Gemma large language model on a satellite carrying an H100 GPU — the first time a frontier-class chip operated from orbit. NVIDIA followed at GTC with a full space computing platform: Vera Rubin modules, IGX Thor, and Jetson Orin, with Axiom, Kepler Communications, Planet Labs, and Starcloud as launch partners. Google’s Project Suncatcher envisions constellations of TPU-equipped satellites connected by free-space optical links, with prototype launches planned for early 2027. China’s Zhejiang Laboratory has already deployed a 12-satellite computing constellation carrying an 8-billion-parameter AI model, while Beijing-based BAIST plans a megawatt-scale orbital data center by 2035. Two weeks ago, Syntiant and Novi Space demonstrated real-time AI object detection on a commercial satellite — with model retraining in under 24 hours.

Space sidesteps the terrestrial binding problem almost entirely. Solar power in LEO is abundant and, in the right orbit, nearly continuous: a solar panel there produces up to eight times more energy than on Earth. There are no permitting delays, no water rights conflicts, no grid interconnection queues. Thermal dissipation, though, remains the ultimate engineering frontier. On Earth, data centers use convection and liquid cooling. In vacuum, the only option is radiation, and radiating waste heat from a megawatt-scale cluster requires massive deployable radiator arrays. Small-scale inference modules like Jetson Orin handle this with passive radiation. The 5-gigawatt architectures Starcloud envisions will require radiator wings at an industrial scale, which makes heat management the “orbital water rights” of the next decade. The strategic logic extends further: if compute dispersal is a resilience strategy, the Moon’s south pole becomes a redundancy node, specifically the Peaks of Eternal Light, where near-continuous solar exposure meets permanently shadowed craters for thermal management. Seen this way, the Artemis program’s core infrastructure is about strategic depth for critical systems.
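To give the radiator problem a rough sense of scale, here is a minimal sketch based on the Stefan-Boltzmann law for an ideal flat radiator. The 300 K surface temperature, the 0.9 emissivity, and the two-sided panel are my own illustrative assumptions, not figures from Starcloud or NVIDIA, and the function name `radiator_area_m2` is hypothetical. It ignores absorbed sunlight and Earth infrared, so it should be read as a lower bound.

```python
# Stefan-Boltzmann constant in W / (m^2 K^4)
SIGMA = 5.670374419e-8


def radiator_area_m2(heat_watts, temp_kelvin=300.0, emissivity=0.9, sides=2):
    """Ideal radiator area needed to reject heat_watts to deep space.

    Assumptions (illustrative only, not from any vendor spec):
    - the radiator surface sits at a uniform temp_kelvin
    - both faces radiate when sides=2
    - no absorbed sunlight or Earth infrared, so this is a lower bound
    """
    flux_per_m2 = emissivity * SIGMA * temp_kelvin ** 4 * sides
    return heat_watts / flux_per_m2


if __name__ == "__main__":
    for label, watts in [("1 MW cluster", 1e6), ("5 GW architecture", 5e9)]:
        area = radiator_area_m2(watts)
        print(f"{label}: about {area:,.0f} m^2 ({area / 1e6:.3f} km^2)")
```

Under these assumptions a 1 MW cluster needs on the order of a thousand square metres of radiator, and a 5 GW architecture needs several square kilometres. Running the radiator hotter shrinks the area quickly because the rejected heat scales with the fourth power of temperature, which is the main design lever this sketch exposes.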

Orbital compute also introduces a survivability profile that terrestrial infrastructure cannot match. Starlink demonstrated this in Ukraine: a distributed constellation of thousands of satellites has no single node to destroy. A 5,000-satellite compute constellation presents a fundamentally different target than three data centers in a desert. Russia’s development of nuclear anti-satellite capabilities — a weapon the US Department of Defense warned could render LEO unusable for an extended period — is the only theoretical counter. But an EMP that disables all satellites in a wide orbital zone would destroy Russian, Chinese, and commercial assets alongside the target, making it a mutual-destruction weapon, not a practical one.
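The arithmetic behind that comparison can be sketched in a few lines. This is a deliberately simple model that assumes every node carries an equal share of capacity and that losses are independent of one another. Both are my simplifying assumptions, and `surviving_fraction` is a hypothetical helper name.

```python
def surviving_fraction(total_nodes, nodes_destroyed):
    """Fraction of capacity left, assuming every node carries an equal share."""
    if total_nodes <= 0:
        raise ValueError("total_nodes must be positive")
    # Clamp so that destroying more nodes than exist leaves zero, not a negative.
    destroyed = min(max(nodes_destroyed, 0), total_nodes)
    return 1 - destroyed / total_nodes


if __name__ == "__main__":
    targets = [("three data centers", 3), ("5,000-satellite constellation", 5000)]
    for name, total in targets:
        left = surviving_fraction(total, nodes_destroyed=3)
        print(f"{name}: {left:.2%} of capacity left after 3 losses")
```

Three successful strikes remove everything in the concentrated case and well under a tenth of a percent in the distributed one. The model says nothing about area-effect weapons, which is exactly why the nuclear anti-satellite scenario is the exception.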

The jurisdictional ambiguity adds another layer: who owns inference running on a satellite that crosses six sovereign territories per orbit? The Outer Space Treaty bans nuclear weapons in space but has no framework for compute sovereignty.

The second vector runs in the opposite direction — not up to orbit, but outward to the edge of the network, to every device in every pocket.

There are 7.4 billion active smartphones in the world today. India alone accounts for 1.2 billion smartphone connections. Mobile neural processing units now deliver throughput approaching that of data-center GPUs from 2017. Billion-parameter language models run in real time on flagship devices. IDC forecast that over 370 million GenAI-capable smartphones would ship in 2025, 30% of all shipments, with that share rising to over 70% by 2029. Deloitte estimates inference workloads now account for roughly two-thirds of all AI compute, up from one-third in 2023. The on-device AI market is projected to grow from $33 billion in 2026 to $157 billion by 2033.
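Two of these figures imply numbers that are worth making explicit. The sketch below derives the total shipment volume implied by the IDC share and the compound annual growth rate implied by the market projection. It uses only the figures quoted above, treats "over 370 million" as exactly 370 million, and the helper names are hypothetical.

```python
def implied_total(part, share):
    """Total implied when `part` is stated to be `share` of the whole."""
    return part / share


def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


if __name__ == "__main__":
    # 370 million GenAI-capable phones stated as 30% of 2025 shipments
    total_shipments_m = implied_total(370, 0.30)
    # $33 billion in 2026 growing to $157 billion by 2033
    growth = cagr(33, 157, 2033 - 2026)
    print(f"Implied 2025 shipments: about {total_shipments_m:,.0f} million units")
    print(f"Implied on-device AI market growth: about {growth:.0%} per year")
```

The implied totals are roughly 1.2 billion smartphones shipped in 2025 and market growth of about 25% per year, sustained for seven years.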

The strategic significance of edge compute is not mainly about latency or privacy. It is about distributing the physical substrate of sovereign AI to a target set so large it becomes indestructible. Iran can strike three data centers. It cannot strike 1.2 billion smartphones. No adversary can. The compute does not live in a building with coordinates — it lives in every pocket, on every wrist, behind every pair of smart glasses. Each device is a micro-sovereign compute node. Individually trivial. Collectively, an AI infrastructure that is physically unconcentrable and therefore physically undefeatable. The parallel to virtual power plants is direct: just as distributed solar and batteries aggregate into grid-scale capacity without centralized generation, distributed NPUs aggregate into national-scale inference capacity without centralized data centers.
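The virtual power plant analogy can be put into back-of-envelope form. In the sketch below, only the 1.2 billion device count comes from this article. The per-device throughput and the share of devices available at any moment are hypothetical placeholders I chose for illustration, and `aggregate_tops` is a hypothetical helper rather than any platform API.

```python
def aggregate_tops(devices, tops_per_device, available_fraction):
    """Summed NPU throughput in TOPS across the devices available at one moment."""
    if not 0 <= available_fraction <= 1:
        raise ValueError("available_fraction must be between 0 and 1")
    return devices * tops_per_device * available_fraction


INDIA_DEVICES = 1.2e9       # smartphone connections, from the article
TOPS_PER_DEVICE = 10.0      # hypothetical placeholder, not a measured figure
AVAILABLE_FRACTION = 0.05   # hypothetical placeholder: charged, idle, opted in

if __name__ == "__main__":
    total = aggregate_tops(INDIA_DEVICES, TOPS_PER_DEVICE, AVAILABLE_FRACTION)
    print(f"Aggregate edge inference capacity: about {total:.1e} TOPS")
```

The sum describes many independent inferences running in parallel, one per device. It does not describe a single pooled cluster, since phones have no high-bandwidth interconnect, and not every smartphone connection has an NPU. That limit is why this layer suits inference and leaves training on the ground.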

India’s position in this framing shifts dramatically. In the current orthodoxy, India’s Intelligence layer — models, training capacity, frontier research — is its weakest dimension. But in a distributed inference world, India’s 1.2 billion connected devices become the largest sovereign compute substrate on Earth. The critical question becomes who controls the model weights on those devices. Apple’s Neural Engine runs Apple Intelligence. Qualcomm’s NPUs run whatever Qualcomm certifies. Google controls Android’s ML runtime across 3.9 billion devices globally. Edge AI may not democratize compute sovereignty — it may transfer it from territorial states to platform companies, making Apple and Google de facto sovereign compute providers for billions of people. This is why nation-state foundation models — India’s domestic efforts, the UAE’s Falcon family, Saudi Arabia’s SDAIA programs — become more strategically important in an edge-first world. Whoever controls the weights controls the intelligence at the edge, regardless of who manufactured the silicon.

Neither vector is a replacement for terrestrial infrastructure.

Training frontier models still requires concentrated, high-power, liquid-cooled GPU clusters. The data center buildout is necessary and serious. But training is not where most AI value will ultimately be captured. Inference is. And inference is splitting into three domains: terrestrial data centers, orbital compute, and edge devices. The sovereign compute race is no longer one-dimensional.

This reframing matters most for geographically constrained states. Singapore, the UAE, and Israel suddenly have alternative paths to sovereign AI capability. The Gulf’s centralized data center investments are essential for training. Adding orbital partnerships and edge model ecosystems creates a three-layer resilience architecture where the ground layer can be struck, the orbital layer requires weapons that don’t yet practically exist, and the edge layer cannot be struck at all. A sovereign AI strategy built across all three layers is not just more capable. It is more survivable. And survivability, as the past two months have demonstrated, is not a theoretical concern.

The sovereign compute map most strategists are drawing, color-coded by data center capacity and grid megawatts, is a map of training infrastructure. The inference map has two additional layers that appear on no territorial chart: orbit and the edge. The states that see this early will build not just the most AI infrastructure, but the AI infrastructure that endures.

A note on independence: All opinions shared in this newsletter are my own and do not reflect the views of dmg events, ADIPEC, or any affiliated organizations. This is personal analysis, not institutional positioning.

Sources

NVIDIA, “NVIDIA Launches Space Computing, Rocketing AI Into Orbit,” GTC 2026

Axiom Space, “Orbital Data Centers,” January 2026

CNBC, “Nvidia-backed Starcloud trains first AI model in space,” December 2025

China Daily, “Chinese tech firms race to build AI computing capabilities in space,” December 2025

SatNews, “Syntiant and Novi Space Demonstrate Low-Power AI Inference in Orbit,” March 2026

Arms Control Association, “U.S. Warns of New Russian ASAT Program,” March 2024

Atlantic Council, “Russian nuclear anti-satellite weapons,” February 2024

DataReportal, Digital 2026 Global Overview

IDC, Worldwide Smartphone Market Forecast, August 2025

Coherent Market Insights, On-Device AI Market, 2026–2033

Future Today Strategy Group, Convergence Outlook 2026