AI Inference Changes the Sovereignty Map of the World

The countries that matter for AI are about to shift from those with the biggest GPU clusters to those with the largest user populations.

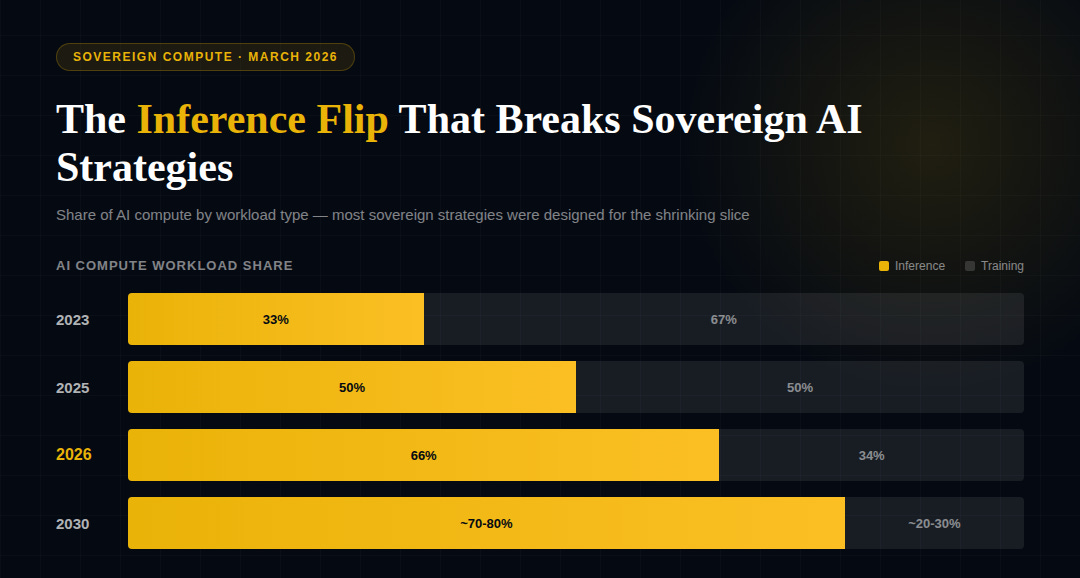

Nearly every sovereign AI strategy was designed for training. The workload that matters most is about to flip from training to inference.

Governments around the world now treat compute as strategic infrastructure. The UAE’s Stargate campus is targeting 5 gigawatts of sovereign compute in Abu Dhabi, backed by G42, OpenAI, Oracle, and NVIDIA. Saudi Arabia’s HUMAIN is scaling one of the world’s largest government-owned facilities at Hexagon in Riyadh, a 480-megawatt Tier IV complex. India is scaling from 38,000 to 100,000 public GPUs by December 2026. France, Japan, Canada, and more than 20 other governments have signed sovereign AI partnerships with NVIDIA. But the structural question embedded in all of this capital is: what workload are they building for?

The answer, almost universally, is training: massive centralized clusters designed to produce AI’s foundation models. That assumption is about to collide with a workload shift that changes the entire calculation. Deloitte estimates that inference already accounts for two-thirds of all AI compute in 2026, up from one-third in 2023. McKinsey projects inference will represent over half of all AI compute and 30–40% of total data center demand by 2030. The inference chip market is projected to exceed $50 billion in 2026. That shift prompted NVIDIA’s $20 billion 'reverse acquihire' of Groq’s inference unit, a move designed to monopolize the low-latency 'token-shuffling' layer that now defines the global AI stack.

Lenovo’s 2026 industry analysis projects that the 80/20 split between training and inference spending is reversing. Training a model is a one-time cost that does not directly generate revenue. Serving it to hundreds of millions of users via inference is a continuous, scaling cost that generates scaling revenue — and it is now the dominant workload in the global AI stack.

This matters because training and inference have fundamentally different infrastructure requirements and different vulnerability profiles. Training workloads are geographically more flexible: build a massive cluster wherever power is cheap and baseload is guaranteed. Inference workloads follow users. They require low-latency, metro-adjacent infrastructure close to population centers. A piece published last week in Communications of the ACM argued that edge inference is increasingly a sovereignty problem, not a latency problem; regulatory mandates under the EU AI Act, India’s DPDP Act, and Brazil’s LGPD are pushing compute to the edge faster than latency requirements alone would dictate. The implication is significant: India’s 1.4 billion potential revenue-generating inference endpoints (read: people) begin to look more strategically consequential than a multi-gigawatt training campus. The country that hosts the users generates the sustained demand that funds the ecosystem. A country that hosts only the training cluster can technically repurpose it for inference, since the same GPUs handle both workloads. But it would do so at significantly worse cost-per-token than dedicated inference silicon such as Groq’s LPU or Amazon’s Inferentia, in a facility optimized for remote cheap power rather than metro-adjacent low latency, and without the user base that generates continuous demand. The hardware is not stranded, but the strategic logic behind its location, economics, and demand source is.
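To put a rough number on why inference infrastructure tends to follow users, the short Python sketch below estimates the best-case round-trip delay that distance alone imposes on a request. It rests on one physical assumption: light in optical fiber travels at roughly two-thirds of its vacuum speed, or about 200 km per millisecond. The three distances are hypothetical illustrations, not measurements of any real facility, and real-world latency would be higher once routing, queuing, and model compute time are added.

```python
# Rough lower bound on network round-trip time imposed by distance alone.
# Assumption: light in optical fiber covers about 200 km per millisecond
# (roughly two-thirds of the speed of light in a vacuum).
FIBER_KM_PER_MS = 200.0


def min_round_trip_ms(distance_km: float) -> float:
    """Best-case round-trip propagation delay over fiber, in milliseconds."""
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    return 2.0 * distance_km / FIBER_KM_PER_MS


# Hypothetical user-to-site distances, chosen only for illustration.
scenarios = {
    "metro-adjacent site": 50,
    "in-country regional site": 1_000,
    "remote overseas campus": 8_000,
}

for label, km in scenarios.items():
    delay = min_round_trip_ms(km)
    print(f"{label}: {km} km, at least {delay:.1f} ms round trip")
```

Under these assumptions the floor grows linearly with distance, so a site thousands of kilometers from its users pays a propagation penalty on every request before any inference work begins.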

The current conflict in the Gulf has made this asymmetry more visible. Any transparent assessment of a sovereign compute strategy requires examining at least three dimensions simultaneously: the political trust architecture (alignment with technology partners and in-country legal and regulatory clarity), the intelligence layer (access to models, data, and talent), and the physical power base (energy, capital, grid capacity, and physical security). The war is stress-testing the power dimension in real time, so long as Hormuz remains functionally closed. The IEA warned this week that the disruption surpasses the combined oil crises of 1973 and 1979. For Gulf sovereign AI strategies funded by energy export revenue, the disruption cuts in both directions: it hits the revenue financing the buildout, and it hits the physical infrastructure that sits in a conflict zone where three commercial data centers have already been hit. An inference-centric world distributes this risk differently. Distributed nodes across multiple geographies are harder to disable than a single mega-cluster, though they require a trust framework, energy supply, and governance architecture in every jurisdiction where they operate. The fragility shifts from concentration to connective tissue.

For infrastructure investors and policy planners, the practical takeaway is clear. The sovereign AI strategies announced over the past 18 months were designed for a training-centric model. The workload mix is shifting toward inference faster than those plans are adapting. Countries with large, digitally active populations and regulatory frameworks mandating local processing (India, Indonesia, Brazil) may find themselves in stronger structural positions than countries that optimized for raw training throughput. The European Data Centre Association’s 2026 report signals that future capacity growth will be constrained by grid readiness, not capital. Sovereign compute strategies that can flex between centralized training and distributed inference, while maintaining trust and security across both modes, will prove more resilient than those locked into a single architecture. The sovereignty question is no longer just “can you train a model?” It is increasingly: where do the inferences run, who governs them, and what happens when the workload your infrastructure was built for is no longer the workload that matters?

A note on independence: All opinions shared in this newsletter are my own and do not reflect the views of dmg events, ADIPEC, or any affiliated organizations. This is personal analysis, not institutional positioning.

Sources

McKinsey, “The Future of AI Workloads,” February 2026

Deloitte, “Technology, Media and Telecom Predictions 2026: AI Compute”

Lenovo, CIO Playbook / 2026 Industry Analysis

JLL, “2026 Global Data Center Outlook”

European Data Centre Association, 2026 State of European Data Centers

ACM, “Inference at the Edge Is a Sovereignty Problem, Not a Latency Problem,” March 2026

G42/OpenAI/Oracle, Stargate UAE announcement, May 2025; The National, January 2026

Saudi Press Agency, Hexagon Data Center launch, January 2, 2026

India PIB, AI Impact Summit GPU announcements, February 2026

CNBC/NPR, Iran ceasefire developments, March 25, 2026

IEA Director Fatih Birol, remarks March 23, 2026